A couple of weeks ago over at FlowingData, Nathan Yau wrote a post about how to improve government data sites. The post was mostly a constructive critique of the difficulties users have extracting and using data provided by the federal government. (Surely state and local governments create similarly poor interfaces.) It’s not that I disagree with Nathan, but I think it’s worth digging a little deeper into why government web sites and data sets aren’t particularly user-friendly.

Having worked at a government agency for nearly a decade and spoken to countless agencies about data visualization, presentation techniques, and technology challenges over the past few years, I thought I might add my own perspective.

In his post, Nathan suggests three reasons why government data sites are inexcusably poor:

> Maybe the people in charge of these sites just don't know what's going on. Or maybe they're so overwhelmed by suck that they don't know where to start. Or they're unknowingly infected by the that-is-how-we've-always-done-it bug.

In my experience, government web sites are difficult to use and extract data from, but not because government workers don’t “know what’s going on” or are “overwhelmed by suck.” The real answer is probably closer to the “that-is-how-we’ve-always-done-it bug,” but even that simplifies a more complicated story.

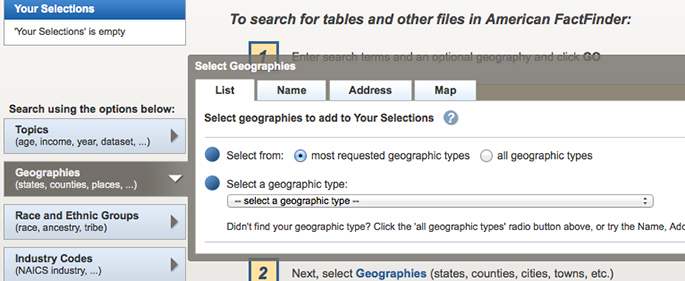

Let’s say for the moment that you work at a large government agency and your job is to process a large household survey and make it available to the public (think, say, the Census Bureau). Up until the past couple of years or so, your target audience was other government workers, academics, and researchers in similar fields. And most of those analysts use tools similar to the ones you’re using: Stata, SAS, SPSS, MATLAB, maybe a little Fortran or C++. So what do you do? You create a data file so that they can download it, unpack it, and analyze it using those programming languages. Your primary audience is not journalists (data-driven journalism had not yet taken off) or bloggers (in-depth data blogging was just beginning) or data scientists (the term didn’t even exist).

Now, however, with the Open Data movement, interest in and demand for Big Data, expanded open source programming languages and tools, and the general explosion of DATA EVERYWHERE, everyone is clamoring for more of your government data. So the mandate has changed. And you, as the government worker who has for so long processed this survey the same way, are now being asked to provide that data in a variety of formats. You’re not familiar with those different file formats or tools, so you ask about training or maybe even hiring some additional staff. Unfortunately, that’s probably not going to happen. Demand for more (or better) data has not translated into more funds to train existing staff or hire new staff. For example, between fiscal years 2011 and 2013, the overall budget appropriation for the Census Bureau fell from $1.2 billion to $859.3 million, a decline of over 25 percent. (It’s hard to tell, but that may actually overstate the decrease if the 2011 appropriation still included some extra funds to process the 2010 decennial census.) At the Bureau of Economic Analysis, the producer of the National Income and Product Accounts, total appropriations fell by a smaller amount: from $93 million in 2011 to $89.8 million in 2013.
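For readers who want to check the budget arithmetic, here is a minimal Python sketch. It uses only the appropriation figures quoted above; the helper name `percent_decline` is mine, and because the 2011 Census figure is the rounded $1.2 billion, the Census result is only approximate.

```python
def percent_decline(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Appropriations in millions of dollars, as quoted in the text.
# The 2011 Census figure is the rounded $1.2 billion, so treat
# the Census result as an approximation.
census = percent_decline(1200.0, 859.3)
bea = percent_decline(93.0, 89.8)

print(f"Census Bureau, FY2011 to FY2013: {census:.1f}% decline")
print(f"Bureau of Economic Analysis, 2011 to 2013: {bea:.1f}% decline")
```

On these numbers the Census decline works out to roughly 28 percent, consistent with "over 25 percent," while the BEA decline is a little under 3.5 percent.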

I don’t believe that government agencies can’t or don’t want to make their data more accessible or are so overwhelmed by the technology that they’re unable to come up with solutions. Instead, I think many agencies have yet to adjust to a world that demands data, and demands that it be easily accessible at all times. It’s going to take time, money, and training for the government to catch up.
