Last week, the White House issued new guidance encouraging federal agencies to draw on evidence in their budget proposals. The new guidelines make it clear that requests backed by evidence and committed to innovation have a better chance of being fully funded.

It almost goes without saying today that policymaking should be evidence based. Scarce public dollars should go to programs that target real problems, operate efficiently, and demonstrably achieve their intended goals. But policymaking is a messy, iterative process, and the opportunities for evidence to inform decisions are numerous and varied.

Often, the conversation about “evidence-based policy” focuses too narrowly on a single question and a single step in the policymaking process: has an initiative or program been proven effective? If it hasn’t, the reasoning goes, it’s not “evidence based” and shouldn’t be implemented.

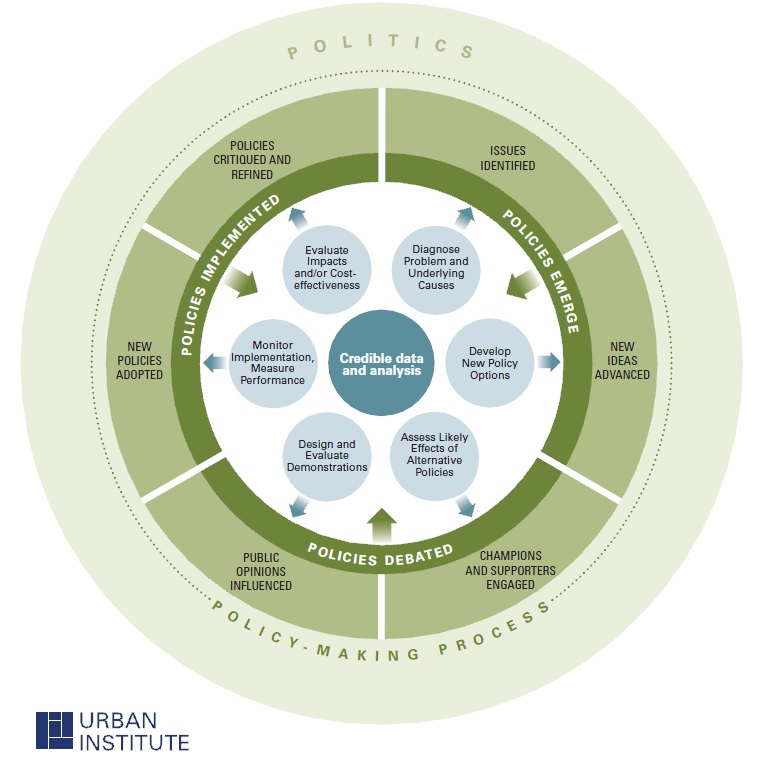

In reality, policy development occurs in multiple stages and extends over time. New policies emerge in response to problems and needs, possible approaches are advanced and debated, new policies are adopted and implemented, and established policies are critiqued and refined. Evidence can add value at every stage, but the questions decisionmakers need to answer differ from one stage to the next.

Randomized controlled trials, in which people are randomly assigned either to participate in a program or to serve as controls, are often called the “gold standard” for evidence about whether a program is effective. And indeed, this approach is extremely powerful. It compares outcomes for a program’s participants with the outcomes that comparable people achieve without the program, and random assignment is what makes the two groups comparable.

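But some programs can’t be evaluated effectively this way. In particular, complex “place-based” interventions (like the new Promise Neighborhoods Initiative), which aim to transform entire neighborhoods rather than serve individuals who could be randomly assigned, aren’t good candidates for randomized controlled trials.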

Other tools, meanwhile, are the “gold standard” for delivering the evidence policymakers need to answer other questions. For example:

- microsimulation models (like the Urban-Brookings tax policy model) can forecast outcomes under a wide range of “what if” scenarios;

- administrative data from public agencies can be systematically linked and analyzed to answer questions about program design and implementation; and

- qualitative information gathered through in-person observation, in-depth interviews, or focus groups is sometimes needed to fully diagnose a complex problem, design an innovative solution, or understand exactly how a program should be implemented.

Instead of relying on a single tool, policymakers and practitioners should draw from a “portfolio” of tools to effectively advance evidence-based policy. Using the wrong tool may produce misleading information or fail to answer the questions that are most relevant when a decision is being made. Applying the right tool to the policy question at hand can inform public debate, help decisionmakers allocate scarce resources more effectively, and improve outcomes for people and communities.
